The benefits of an artificial intelligence and machine learning model assessment

Artificial intelligence and machine learning (AI/ML) models are storming the business landscape in nearly every sector, providing fast, powerful insights that can only be found in big data. Questions once thought impossible to answer are now being answered. However, as powerful as these models are, they take considerable work to create, and every step needs attention. A small issue in any one step can lead to biased or incorrect results. An external validation of a model can identify issues, find corrections or improvements and provide confidence in the data and insights gathered. An AI/ML model assessment delivers a variety of benefits, such as improving model performance, reducing bias and providing confidence in the model.

What is an AI/ML model assessment?

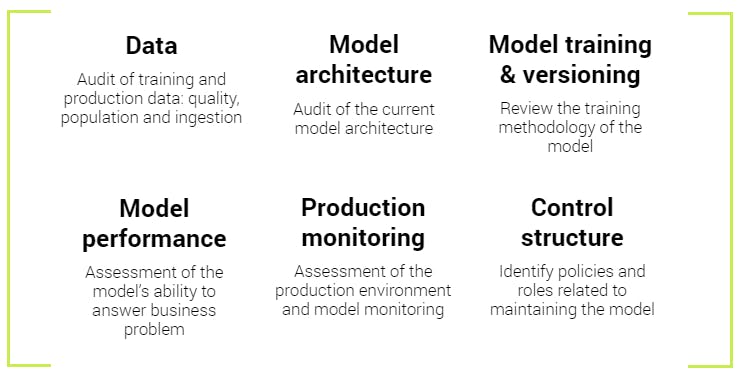

A model assessment is a thorough review of a model that utilizes AI/ML, conducted through discussions, code and documentation review, and analysis of the model’s output. The model assessment process follows the entire life cycle of a model.

Each step of a model’s life cycle involves decisions that can affect the outcome of the model. In an assessment, we review each step: we collect information about what was done, ask probing questions, conduct further research and provide written feedback on potential improvements.

Improving model performance

The performance of an AI/ML model can be measured in several ways: overall accuracy of the model, number of false positives and compute time of the model, along with many other metrics. Regardless of the metric, a model’s performance can be hindered by sub-optimal choices at any step of the model’s creation. A full assessment can identify opportunities to optimize model performance in the current model and in future iterations. Data often changes slowly over time, which can cause models to underperform compared to when they were first trained. An assessment includes discussion of techniques to monitor ongoing performance and establish criteria for retraining or rebuilding a model.
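The monitoring idea above can be illustrated with a minimal Python sketch. It assumes a binary classifier with 0/1 labels; the names `binary_metrics` and `needs_retraining` and the 0.05 thresholds are hypothetical choices made for illustration, not criteria prescribed by an assessment.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    accuracy: float
    false_positive_rate: float


def binary_metrics(y_true, y_pred):
    """Compute accuracy and false-positive rate for 0/1 labels."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    accuracy = (tp + tn) / len(pairs)
    negatives = tn + fp
    # With no actual negatives, the false-positive rate is reported as 0.0.
    fpr = fp / negatives if negatives else 0.0
    return Metrics(accuracy, fpr)


def needs_retraining(baseline, current, max_accuracy_drop=0.05, max_fpr_rise=0.05):
    """Flag the model when either metric degrades past its (assumed) limit."""
    accuracy_drop = baseline.accuracy - current.accuracy
    fpr_rise = current.false_positive_rate - baseline.false_positive_rate
    return accuracy_drop > max_accuracy_drop or fpr_rise > max_fpr_rise


if __name__ == "__main__":
    # Metrics at training time versus metrics on more recent labeled data.
    baseline = binary_metrics([1, 0, 1, 0], [1, 0, 1, 0])
    current = binary_metrics([1, 0, 1, 0], [1, 1, 0, 0])
    print(current, needs_retraining(baseline, current))
```

In practice the thresholds would be agreed with the business, and the comparison would run on a schedule against freshly labeled data.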

The data science world is constantly evolving, and new algorithms and techniques are always appearing. A model’s current algorithm might have been the best performer for the task a year ago, but a better one may have emerged from research in the meantime. Along with the current model design, an assessment discusses potential new techniques and algorithms for future improvement of the model.

Addressing and reducing bias

As AI/ML models are incorporated into more of our world, biased models can have a significant impact on a person’s life, such as denial of banking services, problems during a hiring process and even misidentification by law enforcement. Biased models could also be impacting your revenue and affecting your business’s success.

Biased models, such as some facial recognition models, are in the world and commercially available from major technology companies; these bias issues affect even very technically knowledgeable companies (1). As much as one can work to ensure an unbiased dataset, the world this data comes from is itself biased. For example, in banking, records may include few people from under-banked populations, which skews the dataset. Checking a dataset for bias and assessing methods to remediate biased datasets are important aspects of a model assessment.
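One simple way to check for the kind of under-representation described above is to compare each group’s share of the dataset with its share of a reference population. The following Python sketch is illustrative only: the function name `representation_gaps`, the `segment` field, the group labels, the reference shares and the 0.05 tolerance are all hypothetical.

```python
from collections import Counter


def representation_gaps(records, group_key, reference_shares, tolerance=0.05):
    """Return groups whose share of the data falls short of the reference share.

    records: list of dicts, each with a group label under group_key.
    reference_shares: expected share of each group, e.g. from census data.
    """
    counts = Counter(record[group_key] for record in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if expected - observed > tolerance:
            gaps[group] = (observed, expected)
    return gaps


if __name__ == "__main__":
    # Hypothetical dataset: 9 records from one segment, 1 from another.
    records = [{"segment": "banked"}] * 9 + [{"segment": "under_banked"}]
    reference = {"banked": 0.8, "under_banked": 0.2}
    print(representation_gaps(records, "segment", reference))
```

A flagged group is a prompt for discussion, for example about resampling or collecting more data, rather than proof that the resulting model is biased.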

There is a common saying about AI/ML models: “garbage in, garbage out.” This refers to the training data: because a model learns from the data provided to it, poor-quality data results in a poorly performing model. In a similar vein, biased training data leads to a biased model. Data is one of the main areas where bias enters a model, so a close inspection of the data source, data quality, population and data handling is critical to an assessment. Data is the foundation of a model, and a good inspector pays especially close attention to the foundation.

However, data is not the only place bias can enter; it can also enter during feature creation and data encoding. For instance, a fuzzy name-matching algorithm may perform differently on surnames of different origins, creating a bias toward certain populations. A step-by-step discussion of all aspects of a model can identify these potential pitfalls and methods to avoid them.
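To make the name-matching example concrete, one can measure how often a matcher links known-matching name pairs within each group and compare the rates. The Python sketch below uses the standard library’s `difflib.SequenceMatcher` as a stand-in for whatever fuzzy matcher a model actually uses; the function name `match_rate_by_group`, the group labels and the 0.85 threshold are assumptions for illustration.

```python
from collections import defaultdict
from difflib import SequenceMatcher


def match_rate_by_group(pairs, threshold=0.85):
    """Share of true-match name pairs the matcher links, per group.

    pairs: iterable of (group, name_a, name_b), where name_a and name_b
    are known to refer to the same person.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, name_a, name_b in pairs:
        score = SequenceMatcher(None, name_a.lower(), name_b.lower()).ratio()
        totals[group] += 1
        if score >= threshold:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


if __name__ == "__main__":
    # Hypothetical labeled pairs; real ones would come from verified records.
    pairs = [
        ("group_a", "Johnson", "Jonson"),
        ("group_a", "Smith", "Smyth"),
        ("group_b", "Nguyen", "Ngyuen"),
        ("group_b", "Oyelaran", "Oyelarin"),
    ]
    print(match_rate_by_group(pairs))
```

A large difference in match rates between groups suggests the feature treats populations unevenly and deserves a closer look during the assessment.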

Gaining confidence from your model

AI/ML models are complex and can appear as a mysterious black box to even the most tech-savvy and knowledgeable stakeholder. Because of this, convincing an audience of the power of a model can be an uphill battle for the data science team that developed it. An external validation of a model can increase confidence in the model, supporting its adoption inside and outside a company. As part of the assessment, a report covering the model and its many aspects is created for the model’s creator to distribute.

Discussing these complex models at length with external partners can help a data scientist look at the business problem in a different light. This allows them to consider things they had not thought of previously, solve the problem more effectively or address new adjacent business problems. This type of benefit is especially powerful during the COVID-19 pandemic, when collaborative efforts are difficult and have receded.

Moving beyond an assessment

Our Baker Tilly professionals are experienced in AI/ML model assessments and can add value beyond the model assessment with regulatory audits, SOC reports, and data and cloud security assessments performed alongside it. With our breadth of knowledge in securing data, navigating complex regulations and reporting, our practice professionals can help you achieve your business goals.